Migrating MySQL to YugabyteDB Using pgloader

We understand that database migrations can be painful. We have helped users successfully migrate from MySQL to YugabyteDB, a PostgreSQL-compatible distributed SQL database. A very popular tool to accomplish this task is pgloader. In this post, we will cover how to migrate both your MySQL schema as well as data to YugabyteDB.

Prerequisites

Before starting the migration there are a few prerequisites you’ll need to address.

You’ll need access to the MySQL database from which you wish to migrate the schema and data. Additionally, you’ll need to have ysqlsh command line connectivity to a running YugabyteDB cluster that you are going to migrate into.

Create a database on the YugabyteDB cluster that you will migrate into from the source cluster. This database needs to match the database name from MySQL. There would not be any tables or data behind the database but pgloader requires the database to exist on the target cluster. You can create the database by following these steps:

# Use ysqlsh to connect to any node of the YugabyteDB cluster ysqlsh --host=<ip> # Create the database create database <name>;

Preparing to Migrate

You would also ideally need a separate migration machine to install and run pgloader. That way you’re not stealing resources from your target or source servers. To install pgloader on this machine, do the following:

1. Install docker. Since we were installing docker on a CentOS machine, we followed these instructions.

2. After installation, start docker by running sudo systemctl start docker.

3. Verify docker was successfully installed by running sudo docker run hello-world upon which you should get the output shown below.

$ sudo docker run hello-world

Hello from Docker!

This message shows that your installation appears to be working correctly.

To generate this message, Docker took the following steps:

1. The Docker client contacted the Docker daemon.

2. The Docker daemon pulled the "hello-world" image from the Docker Hub.

(amd64)

3. The Docker daemon created a new container from that image which runs the

executable that produces the output you are currently reading.

4. The Docker daemon streamed that output to the Docker client, which sent it

to your terminal.

To try something more ambitious, you can run an Ubuntu container with:

$ docker run -it ubuntu bash

Share images, automate workflows, and more with a free Docker ID:

https://hub.docker.com/

For more examples and ideas, visit:

https://docs.docker.com/get-started/Verify Connectivity to Source MySQL DB

Next, ensure that the migration machine is able to connect to the MySQL server, because pgloader will need to communicate with MySQL over port 3306. Also make sure that the username/password used by pgloader and the IP address from which pgloader is connecting has permissions to connect to the MySQL source database.

1. To verify network connectivity you can use telnet <MySQL_ip> 3306.

2. To grant the pgloader ip the permissions to access the database you can run the following command.

>GRANT ALL PRIVILEGES ON *.* TO 'root'@'<pgloader_instance_ip>' WITH GRANT OPTION; flush PRIVILEGES;

3. To add a password for the ip/user combination, use the following command:

SET PASSWORD FOR 'root'@'<pgloader_instance_ip>' =

PASSWORD('<password>');If you see a “failed to connect” message like this after doing the above steps check the access with your MySQL DBA:

2021-04-22T17:33:33.232901Z ERROR #1=mysql: Failed to connect to #1# at "172.161.20.87" (port 3306) as user "root": MySQL Error [1045]: "Access denied for user 'root'@'ip-172-161-27-195.us-east-2.compute.internal' (using password: YES)" 2021-04-22T17:33:33.232990Z LOG report summary reset table name errors rows bytes total time ----------------- --------- --------- --------- -------------- fetch meta data 0 0 0.000s ----------------- --------- --------- --------- -------------- ----------------- --------- --------- --------- --------------

Verify Connectivity to Target YugabyteDB Cluster

The final check is to make sure the pgloader instance is able to reach the YugabyeDB cluster. For this we’ll need to check to make sure the pgloader instance is able to communicate with one of the YugabyteDB nodes across port 5433.

1. To verify connectivity run telnet <YugabyteDB_node_ip> 5433.

Installing pgloader (Docker)

docker pull yugabytedb/pgloader:v1.1 docker run --rm --name pgloader yugabytedb/pgloader:v1.0 pgloader --version

You should see the following response:

pgloader version "3.6.3~devel" compiled with Clozure Common Lisp Version 1.11.5/v1.11.5 (LinuxX8664)

For pgloader command flags:

docker pull yugabytedb/pgloader:v1.1 docker run --rm --name pgloader yugabytedb/pgloader:v1.1 pgloader --help

pgloader [ option ... ] command-file ... pgloader [ option ... ] SOURCE TARGET --help -h boolean Show usage and exit. --version -V boolean Displays pgloader version and exit. --quiet -q boolean Be quiet --verbose -v boolean Be verbose --debug -d boolean Display debug level information. --client-min-messages string Filter logs seen at the console (default: "warning") --log-min-messages string Filter logs seen in the logfile (default: "notice") --summary -S string Filename where to copy the summary --root-dir -D string Output root directory. (default: #P"/tmp/pgloader/") --upgrade-config -U boolean Output the command(s) corresponding to .conf file for v2.x --list-encodings -E boolean List pgloader known encodings and exit. --logfile -L string Filename where to send the logs. --load-lisp-file -l string Read user code from files --dry-run boolean Only check database connections, don't load anything. --on-error-stop boolean Refrain from handling errors properly. --no-ssl-cert-verification boolean Instruct OpenSSL to bypass verifying certificates. --context -C string Command Context Variables --with string Load options --set string PostgreSQL options --field string Source file fields specification --cast string Specific cast rules --type string Force input source type --encoding string Source expected encoding --before string SQL script to run before loading the data --after string SQL script to run after loading the data --self-upgrade string Path to pgloader newer sources --regress boolean Drive regression testing

Setting Up pgloader Command File

Instead of using the pgloader command line SOURCE TARGET with options, we use a command file to simplify the docker command.

load database from mysql://root:password@172.161.20.87:3306/testdb into postgresql://yugabyte:yugabyte@172.161.30.169:5433/testdb WITH max parallel create index=1, batch rows = 1000;

Running pgloader

pgloader Schema and Data Migration

The docker image is based on centos7. We can “bash” into the container and run the pgloader command (/usr/local/bin/pgloader). In this doc, we store the pgloader command-file in “centos” home directory and use docker volume to map the configuration from centos home directory to docker container directory. The docker flag “–rm” will make sure the container is removed once the pgloader job is done.

docker run --rm --name <name_for_container> -v <local_dir pgloader_config_dir>:<mount_path_in_container> yugabytedb/pgloader:v1.1 pgloader <mount_path_in_container>/<pgloader_config_file>

Ex. pgloader command

[root@ip-172-161-27-195 centos]# pwd /home/centos [root@ip-172-161-27-195 centos]# ls pgloader.conf [root@ip-172-161-27-195 centos]# docker run --rm --name pgloader1 -v /home/centos:/tmp yugabytedb/pgloader:v1.1 pgloader -v -L /tmp/pgloader.log /tmp/pgloader.conf

We should get the below output when the pgloader is running.

2021-04-22T18:49:00.000672Z LOG pgloader version "3.6.3~devel" 2021-04-22T18:49:00.264485Z LOG Migrating from #<MYSQL-CONNECTION mysql://root@172.161.20.87:3306/testdb #x302001D3B50D> 2021-04-22T18:49:00.264662Z LOG Migrating into #<PGSQL-CONNECTION pgsql://yugabyte@172.161.20.43:5433/testdb #x302001D3B3AD>

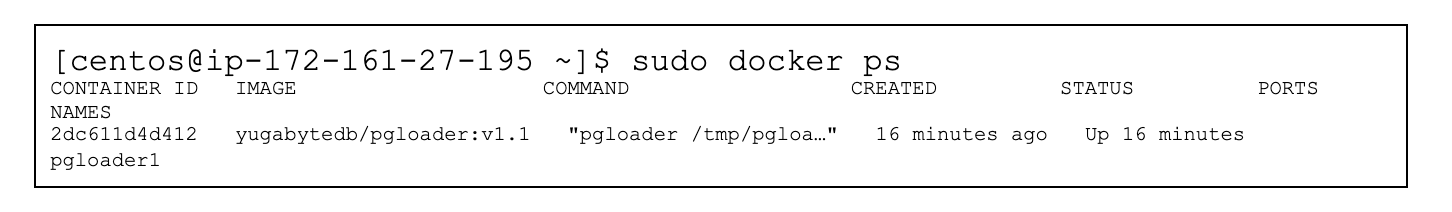

We can also double check the pgloader is running by running docker ps.

We can also tail the pgloader.log we specified in the docker command.

[centos@ip-172-161-27-195 ~]$tail -f pgloader.log

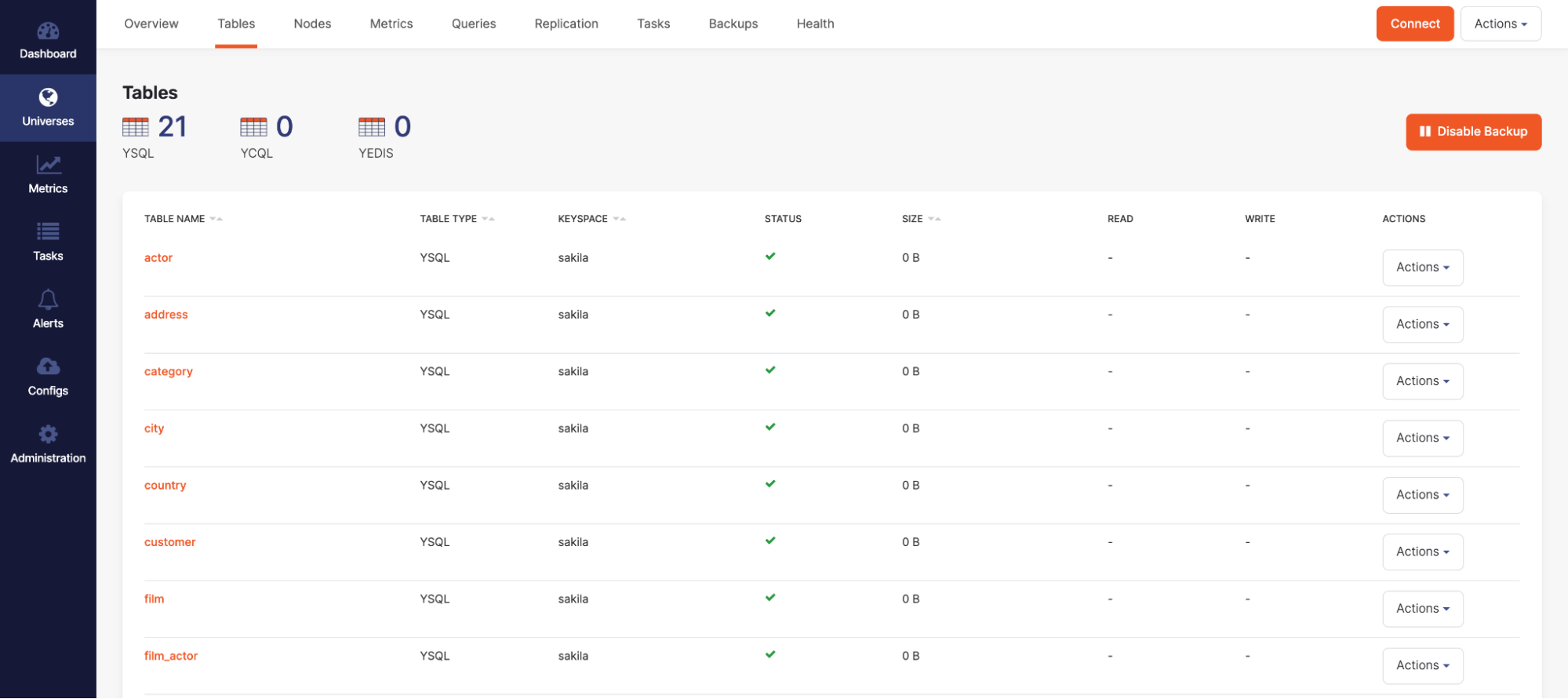

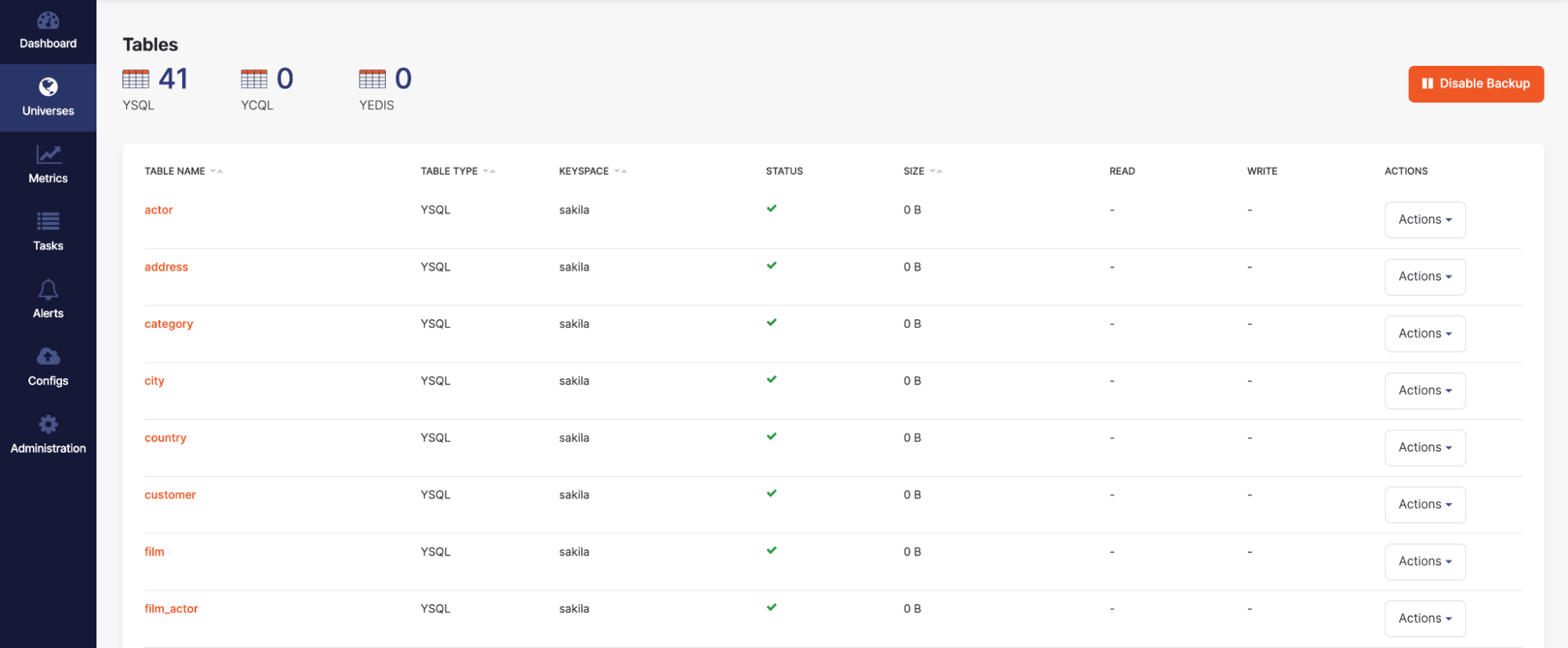

We can check where the pgloader is currently at by going to the platform UI and checking the tables section to see the tables start to load in.

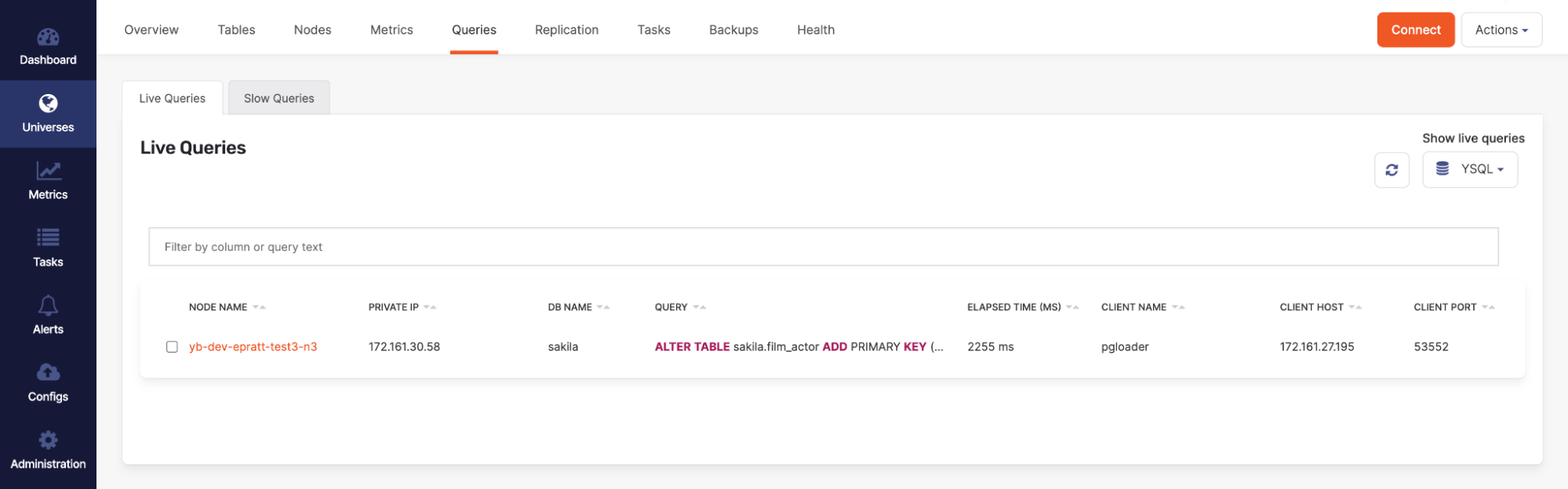

In addition you can check the live queries and see current queries/ddl changes being made on the cluster at that time.

pgloader Schema Migration Only

If you only wanted to migrate the schema from MySQL to YugabyteDB and not include the data, you can add WITH schema only to the pgloader command file and pgloader will only load the schema.

load database from mysql://root:password@172.161.20.87:3306/testdb into postgresql://yugabyte:yugabyte@172.161.30.169:5433/testdb WITH max parallel create index=1, batch rows = 1000, schema only;

Validation

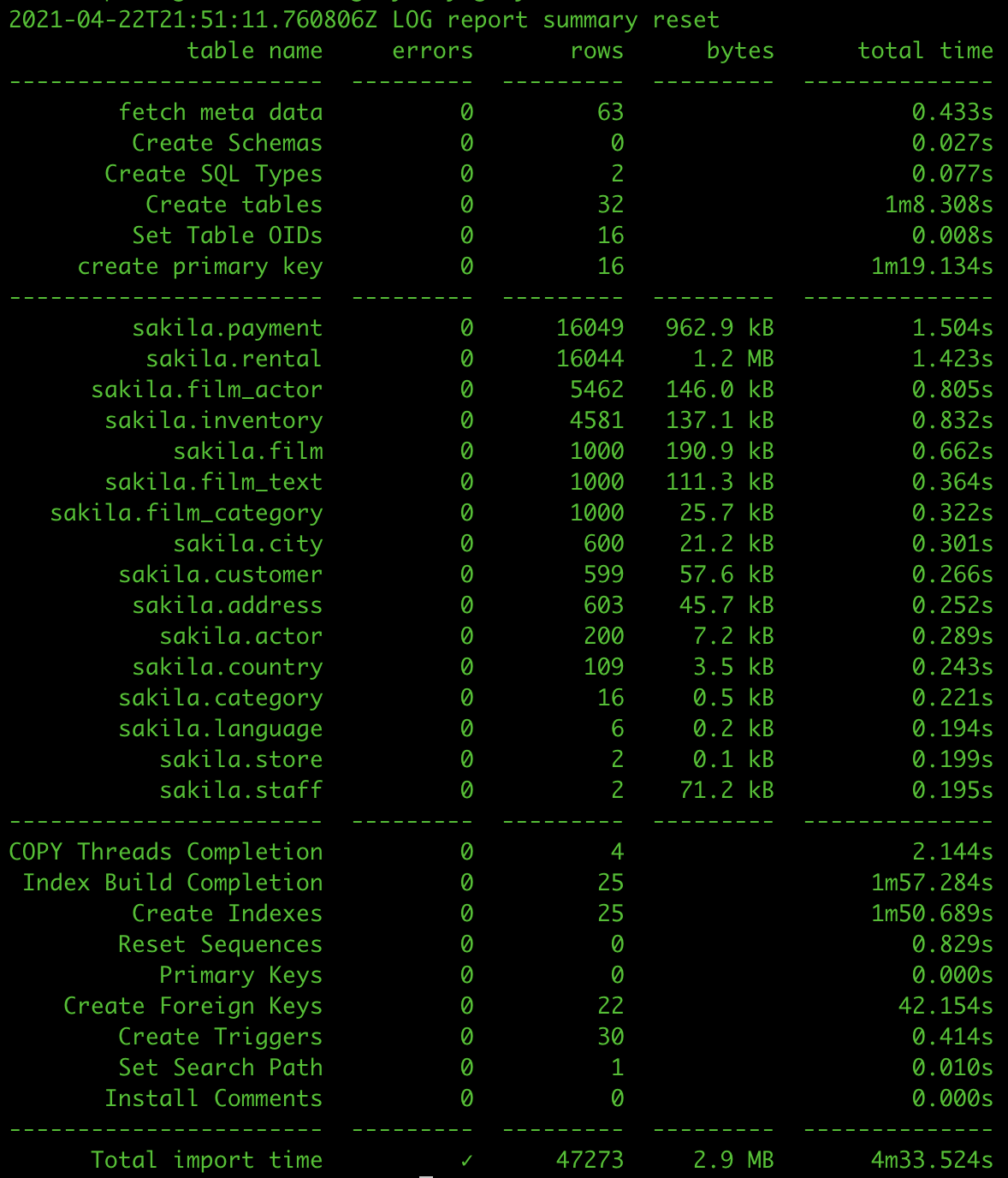

Once pgloader finishes the migration, you will get a summary of the migration steps which includes how long each step took and the number of rows inserted.

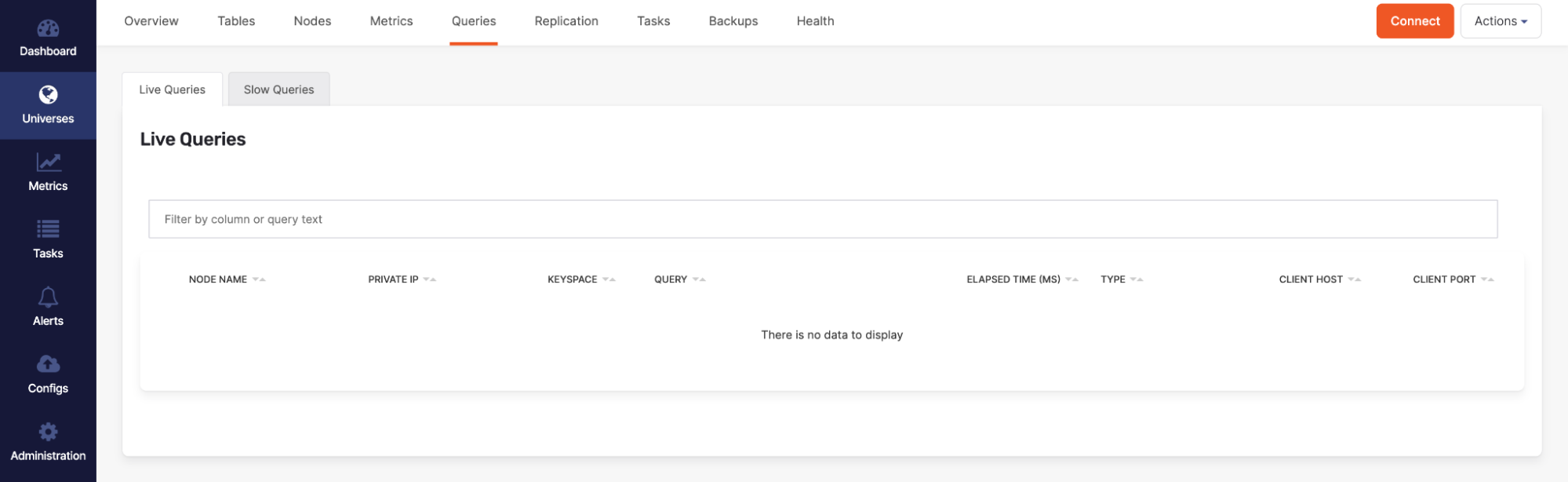

Alternatively you can also check the platform to make sure all the tables are present by looking under the tables tab and making sure there are no active queries migration queries against the cluster (index creation, index backfill, copy from, create table, etc.).

Conclusion

The ability to migrate seamlessly to a new database helps to take the pressure off moving to a new technology. With the changes we’ve made to pgloader at Yugabyte to enable users to easily move your current MySQL database onto YugabyteDB, we relieve that headache. A special thanks to Taylor Mull and Stanley Sung for their collaboration with the creation of this post. As always we are here to help and answer any questions you may have. Join us on our community Slack channel, and star us on GitHub.