Multi-Cloud YugabyteDB in Practice

Several recent regional and cloud-wide outages have made multi-cloud solutions more appealing.

In this blog, we explore the benefits of these architectures, the considerations to keep in mind, and practical guidance for deploying a multi-cloud YugabyteDB universe.

Requirements

Like many distributed systems, YugabyteDB relies on data sharding and replication across multiple nodes. Every node is active and serves traffic, which makes the system both scalable and cost-effective because no capacity sits idle as a standby. As long as replicas are spread across locations, the loss of a node or even an entire location does not affect overall availability.

Selecting node locations is a trade-off between resilience and responsiveness. The system’s inherent resilience depends upon ensuring that data is written to multiple nodes. The further apart the nodes are, the slower each write will be.

| Locations | Resilient to |
|---|---|
| One availability zone | Node/server failure |
| Multiple availability zones | Node and availability zone failure |
| Multiple regions | Node, availability zone, and region failure |
| Multiple clouds | Node, availability zone, region, and cloud-wide failure |

Nodes must be able to discover and communicate with one another regardless of their location. The overall performance of the system depends upon network throughput (how much data we can send at once) and latency (how long it takes to get from node to node).

Geography

Using several cloud providers together provides greater resilience. Because their infrastructures are entirely independent, a failure at one provider is unlikely to affect the others.

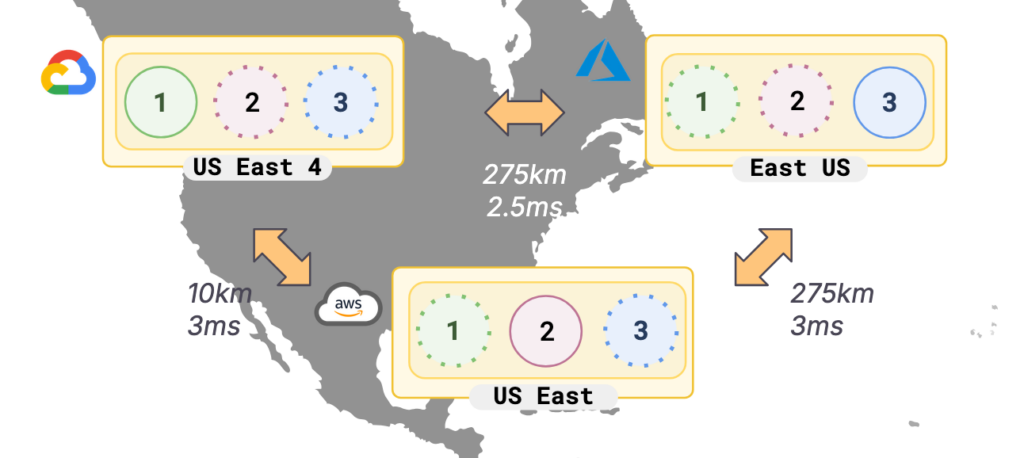

Multiple major cloud providers have a presence either in the same geographic region or close by.

For example, Amazon, Azure, Google, and Oracle all offer cloud services in London, UK, and in Northern Virginia, US. They often also have transit network connections in these locations, making network latency between them lower (under 4 ms) than between different regions in the same cloud.

There are also many cases where a combination of regions from different providers, even if slightly further apart, can offer lower network latency than regions from just one provider. This allows for a more flexible balance between resilience and performance.

Placing nodes in regions that are (relatively) close together increases exposure to political or environmental events that affect the whole area, but reduces the impact of a technical failure at any one provider.

Costs

It may seem that you should immediately start planning for a multi-cloud future, but the downside of this is cost. While compute and storage resources are broadly the same price across providers, using multiple providers will incur higher data egress charges.

Some cloud providers charge per GiB for network traffic leaving even an availability zone. All charge more when it leaves the region, and more still when it leaves the cloud. Egress charges vary, but you can expect to pay around:

| Traffic leaving the | Availability zone | Region | Continent | Cloud |
|---|---|---|---|---|
| Approximate charge | $0.01 per GiB | $0.02 per GiB | $0.05 per GiB | $0.09 per GiB |

Multi-cloud resilience has a higher price tag than multi-region or multi-AZ resilience.
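To make the rates above concrete, here is a minimal sketch that turns a monthly traffic volume into an approximate egress charge. The function name and dictionary keys are our own for illustration, and the rates are simply the approximate figures from the table, not any provider's published price list.

```python
# Approximate per-GiB egress rates (USD) taken from the table above.
# Real prices vary by provider, region, and volume tier.
EGRESS_RATE_PER_GIB = {
    "availability_zone": 0.01,
    "region": 0.02,
    "continent": 0.05,
    "cloud": 0.09,
}


def monthly_egress_cost(gib_per_month: float, boundary: str) -> float:
    """Estimate the monthly charge for traffic that crosses `boundary`."""
    if gib_per_month < 0:
        raise ValueError("traffic volume cannot be negative")
    return gib_per_month * EGRESS_RATE_PER_GIB[boundary]


if __name__ == "__main__":
    # Compare the cost of moving 1 TiB (1,024 GiB) across each boundary.
    for boundary in EGRESS_RATE_PER_GIB:
        cost = monthly_egress_cost(1024, boundary)
        print(f"{boundary}: ${cost:.2f} per TiB")
```

At these rates, the same volume of traffic costs roughly nine times as much leaving a cloud as it does leaving an availability zone.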

Network Traffic

Writes to a YugabyteDB universe require data to be replicated to nodes in other locations, according to the universe’s replication factor (RF).

Typically, three replicas are maintained, so a single write to a multi-cloud universe is sent to all three clouds. To ensure consistency, messages are also exchanged between the nodes and the client, so writing 1 KiB of data generates considerably more than 1 KiB of network traffic.

YugabyteDB offers node-to-node network compression. The best algorithm is gzip, which results in a compression factor of around three and significantly reduces network traffic between nodes. This has a very positive impact on network egress charges, at the cost of a minor increase in CPU usage (less than 5%).
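As a rough illustration of what a compression factor means for egress, the sketch below gzips a sample payload with Python's standard library and scales a traffic volume by an assumed factor. The sample rows and function names are hypothetical, and the ratio you see depends entirely on the data, so it will not match YugabyteDB's real node-to-node traffic.

```python
import gzip
import json


def compression_ratio(payload: bytes) -> float:
    """Return the original size divided by the gzip-compressed size."""
    return len(payload) / len(gzip.compress(payload))


def egress_after_compression(gib: float, factor: float = 3.0) -> float:
    """Scale a traffic volume by an assumed compression factor.

    The default of 3 is the approximate figure quoted above, not a guarantee.
    """
    if factor <= 0:
        raise ValueError("compression factor must be positive")
    return gib / factor


# Hypothetical payload: 200 similar JSON rows standing in for replicated data.
sample = json.dumps(
    [{"id": i, "status": "active", "region": "eu-west"} for i in range(200)]
).encode("utf-8")

if __name__ == "__main__":
    print(f"gzip ratio on the sample payload: {compression_ratio(sample):.1f}x")
    print(f"300 GiB at a factor of 3: {egress_after_compression(300):.0f} GiB")
```

Highly repetitive data like this sample compresses far better than typical mixed workloads, which is why the cost estimate uses the more conservative factor of three.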

Another feature that can reduce network traffic is follower reads, which allow data to be read from the node to which a client connects, rather than having to traverse to a leader (a primary replica) in another cloud.
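In YSQL, follower reads are typically switched on per session and only apply to read-only transactions. The sketch below builds the session statements; the helper name is hypothetical, and the setting names (`yb_read_from_followers`, `yb_follower_read_staleness_ms`) and the 30-second staleness value should be checked against the documentation for your YugabyteDB version.

```python
def follower_read_statements(staleness_ms: int = 30000) -> list[str]:
    """Build the session-level statements that opt a YSQL session into follower reads.

    `staleness_ms` is how old the returned data is allowed to be; the value
    used here is an assumption, so confirm the limits for your version.
    """
    if staleness_ms <= 0:
        raise ValueError("staleness_ms must be positive")
    return [
        # Follower reads only apply to read-only transactions.
        "SET default_transaction_read_only = true",
        "SET yb_read_from_followers = true",
        f"SET yb_follower_read_staleness_ms = {int(staleness_ms)}",
    ]


if __name__ == "__main__":
    for statement in follower_read_statements():
        print(statement + ";")
```

Each statement can be run through any PostgreSQL-compatible driver before issuing queries. The trade-off is that reads may return slightly stale data, so this suits workloads that can tolerate it.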

It is also possible to place all primary replicas in one cloud, further reducing cross-cloud network traffic for writes.

Backups are distributed between the nodes in a universe. For an RF3 multi-cloud universe, each cloud will contribute approximately one-third of a backup’s data to a storage container in a single cloud. This means that two-thirds of the backup data will leave its source cloud.

Inter-Cloud Connectivity

There are many options for network connectivity between clouds, particularly in hub locations with presence from multiple providers.

Site-to-site VPNs can be configured to securely route cross-cloud traffic over the internet. This has a low setup cost but will incur data egress charges to the internet per GiB.

Dedicated interconnects are more expensive to set up, but incur lower egress charges. They also have slightly lower latency and greater security thanks to private routing.

Typically, egress charges for private interconnects are the same as regional egress (~$0.02/GiB) rather than cloud egress (~$0.09/GiB). The cost of the interconnect itself varies by cloud, so the traffic volume at which it breaks even also differs per cloud. In particular, Google Cloud offers only dual 10 Gbps interconnects, which are more expensive than the cheaper options available from AWS and Azure.
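The break-even point can be estimated by dividing the interconnect's fixed monthly cost by the per-GiB saving. This is a minimal sketch: the function name is ours, the rates are the approximate figures above, and the $2,000 monthly fee is a hypothetical input rather than a quoted price.

```python
def break_even_gib(
    interconnect_monthly_cost: float,
    internet_rate: float = 0.09,  # approximate cloud egress, USD per GiB
    private_rate: float = 0.02,   # approximate interconnect egress, USD per GiB
) -> float:
    """Return the monthly cross-cloud traffic (GiB) at which an interconnect pays for itself."""
    saving_per_gib = internet_rate - private_rate
    if saving_per_gib <= 0:
        raise ValueError("the private rate must be lower than the internet rate")
    return interconnect_monthly_cost / saving_per_gib


if __name__ == "__main__":
    # Hypothetical interconnect fee of $2,000 per month.
    gib = break_even_gib(2000)
    print(f"Break-even at about {gib:,.0f} GiB per month")
```

Below that volume a site-to-site VPN is likely the cheaper option; above it, the interconnect's lower per-GiB rate outweighs its fixed cost.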

Real World Usage

To help understand the costs associated with different levels of resilience, let’s take two example workloads.

Both involve transactions consisting of two reads and two writes, totaling around 5KiB of data. The universes have a replication factor of 3, and all nodes are within the same continent.

| | Workload 1 | Workload 2 |
|---|---|---|
| Transactions per second | 250 | 2,500 |
| Transactions per day | 21.6m | 216m |
| Compute | $450 | $2,500 |
| Multi-AZ network egress | $35 | $375 |
| Multi-region network egress | $100 | $1,000 |
| Multi-cloud network egress | $540 | $5,400 |

All costs are approximate, monthly, and do not include storage or backups, which can vary (but would typically be lower than compute).

Workloads with similar or higher throughput than Workload 2 in a multi-cloud topology would benefit from private cloud interconnects at a cost of $2,000 to $2,500. This would reduce network egress charges to those of a multi-region universe (around $1,000), giving a total of up to $3,500 including the interconnect, rather than $5,400 in this case.
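The arithmetic behind that comparison can be sketched as follows, using the approximate monthly figures for Workload 2 from the table above. The constant and function names are our own, and the interconnect range is treated as a monthly fee.

```python
# Approximate monthly figures for Workload 2, in USD, from the table above.
MULTI_CLOUD_EGRESS = 5400    # cross-cloud traffic billed at internet egress rates
MULTI_REGION_EGRESS = 1000   # the same traffic billed at regional rates
INTERCONNECT_FEE_RANGE = (2000, 2500)


def total_with_interconnect(regional_rate_egress: float, interconnect_fee: float) -> float:
    """Monthly network cost when cross-cloud traffic uses a private interconnect."""
    return regional_rate_egress + interconnect_fee


low, high = (
    total_with_interconnect(MULTI_REGION_EGRESS, fee) for fee in INTERCONNECT_FEE_RANGE
)

if __name__ == "__main__":
    print(f"With interconnect: ${low:,.0f} to ${high:,.0f} per month")
    print(f"Without interconnect: ${MULTI_CLOUD_EGRESS:,.0f} per month")
    print(f"Saving: ${MULTI_CLOUD_EGRESS - high:,.0f} to ${MULTI_CLOUD_EGRESS - low:,.0f}")
```

For Workload 1, the same calculation goes the other way: $100 of regional-rate egress plus the interconnect fee is far more than the $540 of multi-cloud egress, so the interconnect would not pay for itself.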

These example costs can be further reduced through follower reads and preferred regions. Read-intensive workloads will be much cheaper, as will workloads with smaller transactions or lower throughputs.

Broadly, you might expect a multi-cloud topology to incur network egress charges roughly equivalent to its compute charges. This, of course, is heavily dependent on the workload profile, with read-heavy applications being comparatively cheaper.

Conclusion

Multi-cloud architectures offer compelling advantages:

- Resilience to node, AZ, region, and cloud failures

- In many cases, lower latency and higher throughput than multi-region universes in a single cloud

YugabyteDB offers several options to mitigate costs:

- Network traffic compression significantly reduces the size of data being sent between clouds

- Follower reads allow data to be read from whichever cloud a client connects to, reducing the inter-node communication between clouds

- Preferred regions allow one cloud to host all primary replicas, further reducing cross-cloud traffic

A dual-cloud architecture can provide multi-region resilience with greater performance, thanks to the closer proximity of the regions. It is useful in scenarios where data must remain within a specific geographical boundary, and it is cheaper than a full multi-cloud approach.