Comparing the Maximum Availability of YugabyteDB and Oracle Database

When migrating from Oracle to PostgreSQL, the main concern is not the SQL features—because PostgreSQL is good enough for many applications and has an active community pushing new features. Instead, the biggest issue is achieving the high availability (HA) Oracle administrators have come to expect from their critical databases. PostgreSQL upgrades require downtime, and PostgreSQL protection involves data loss. Oracle, however, has a long history of avoiding data loss and downtime due to its multiple availability options.

The good news is that YugabyteDB delivers the same SQL features found in PostgreSQL, on an engine built for resilience and scalability. This ensures you can achieve the highest level of protection available for your database, without additional complexity.

Finding a one-to-one mapping between the features of monolithic and cloud-native databases is difficult. So, in this article, we will compare YugabyteDB and Oracle (RAC, Data Guard) based on availability objectives. First, we’ll examine the available options of each database.

YugabyteDB and Oracle Availability Options

Oracle

Oracle is a monolithic database. All sessions must write into one place: the current version of the block that holds the row. Over successive versions, Oracle has implemented the following four features to provide better resilience (in the case of hardware failure) and increased availability (in the case of software upgrades).

- Oracle RMAN (Recovery Manager) manages online backups, detects problems, and restores data in case of disk corruption or failure. Data is protected, but recovery takes a long time (RTO, the Recovery Time Objective, is measured in hours or days). Data loss is reduced by backing up archived logs (RPO, the Recovery Point Objective, is usually measured in hours). The RPO can be lower with additional hardware such as a ZDLRA (Zero Data Loss Recovery Appliance).

- Oracle RAC (Real Application Clusters) sits on top of a specific clusterware (CRS) and allows multiple servers to open the same database without split-brain. It provides a high availability service since it protects against server failure and allows limited scale-out. However, because it is still a shared monolithic database, it doesn’t protect you from disk failure, and it only allows rolling upgrades for the limited number of patches that do not change the database. It is limited by distance, since cache and disks are shared, and by performance, since blocks are exchanged between nodes. RTO and RPO are minimal, but only for server failures, not for failures of the database itself. Combined with ASM (Automatic Storage Management), disk redundancy is provided within the same limit (a cluster within the same availability zone).

- Oracle Data Guard is streaming physical replication to a standby database. It protects the database like a backup, but reduces the restore time when the standby needs to be activated. It was initially designed for disaster recovery (DR), with asynchronous replication (ASYNC) to reduce the impact on the primary, at the cost of data loss in case of failover. When network latency allows it, Data Guard can also replicate in SYNC to avoid data loss (RPO=0). With an additional Observer to enable FSFO (Fast Start Failover), the failover requires no human intervention, reducing the RTO to minutes. With an additional option (ADG, Active Data Guard), the standby database can be used for some operations (read-only sessions, incremental backups, etc.). However, all standby sites, even passive ones, must be licensed with all options. To reduce costs, they may run with limited CPU until they need to be activated, but this increases RTO.

- Oracle GoldenGate is a streaming transactional logical replication solution. It is the only one that provides a multi-master (active-active) setup but, being at a logical level, it requires careful design to avoid replication conflicts and to handle schema changes. With thorough design and monitoring, it can fill gaps in HA features, including online upgrades and 2-way replication for the most critical applications.

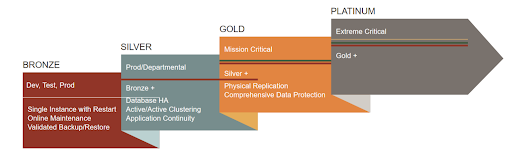

These four features are under the umbrella of Oracle’s MAA (Maximum Availability Architecture) with different levels (bronze/silver/gold/platinum) of protection (and pricing). We will compare each of the levels to YugabyteDB.

YugabyteDB

YugabyteDB is a single coherent database distributed across multiple servers, sharing only the network. Replication is built in, based on the Raft protocol, which is used to distribute data and transactions. The database has two layers:

- YSQL—the SQL processing layer

- DocDB—the distributed key-value storage

Here, Yugabyte combines the advantages of physical replication and logical replication. It is physical for YSQL since it contains the redo logs for row changes (WAL). It is logical for DocDB since it is indexed on the key in the LSM-Tree rather than a physical location in SST files.

This single technology protects against server crashes, disk failure, network partition, or the total loss of a data center, an availability zone, or a cloud region.
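The protection described above rests on a majority quorum, which is how Raft decides that a write is durable. As a minimal sketch of that arithmetic (the function names are hypothetical and this is not YugabyteDB code), the number of replicas that can be lost follows directly from the replication factor:

```python
def quorum_size(replication_factor: int) -> int:
    """Smallest majority of replicas that must acknowledge a write."""
    if replication_factor < 1:
        raise ValueError("replication factor must be at least 1")
    return replication_factor // 2 + 1


def tolerated_failures(replication_factor: int) -> int:
    """Replicas that can be lost while a majority is still reachable."""
    return replication_factor - quorum_size(replication_factor)


if __name__ == "__main__":
    for rf in (1, 3, 5, 7):
        print(f"RF={rf}: quorum={quorum_size(rf)}, "
              f"tolerated failures={tolerated_failures(rf)}")
```

Assuming one replica per failure domain, a replication factor of 3 spread over three zones keeps a majority after the loss of one zone, and a replication factor of 5 after the loss of two.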

As it is distributed with all nodes active, there is no downtime during rolling upgrades. And, since data is stored in append-only WAL and SST files, a block never changes once it is written (as opposed to Oracle or PostgreSQL B-Tree and heap tables, where existing blocks are continuously modified). SST files are verified with a checksum during background compaction and, because they are immutable, they cannot be corrupted by later writes.
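To illustrate why immutability and checksums work well together, here is a toy sketch. It is not the actual SST file format: the block layout, the CRC32 choice, and the function names are all assumptions made for the example. A block is sealed once with its checksum, verified whenever it is read, and compaction writes new blocks instead of modifying existing ones.

```python
import zlib


def seal_block(payload: bytes) -> bytes:
    """Append a CRC32 checksum; a sealed block is never modified afterwards."""
    return payload + zlib.crc32(payload).to_bytes(4, "big")


def verify_block(sealed: bytes) -> bytes:
    """Return the payload if the stored checksum matches, otherwise raise."""
    if len(sealed) < 4:
        raise ValueError("block too short to contain a checksum")
    payload, stored = sealed[:-4], int.from_bytes(sealed[-4:], "big")
    if zlib.crc32(payload) != stored:
        raise ValueError("checksum mismatch: block is corrupt")
    return payload


def compact(sealed_blocks: list) -> bytes:
    """Verify every input block, then write the merged data as a NEW block."""
    merged = b"".join(verify_block(block) for block in sealed_blocks)
    return seal_block(merged)


if __name__ == "__main__":
    blocks = [seal_block(b"k1=v1;"), seal_block(b"k2=v2;")]
    print(verify_block(compact(blocks)))
```

Because nothing is updated in place, a checksum failure can only mean the stored bytes were damaged, and in a replicated system the block can then be rebuilt from a healthy copy.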

Because YugabyteDB is cloud-native, it has been designed specifically for cloud environments, where any part can fail and must be recovered quickly without human intervention.

YugabyteDB also provides asynchronous replication on top of a synchronous cluster, using a common technology (Raft log and MVCC key-value changes) to extend scalability without impacting writes. This includes Read Replicas (for reporting, similar in usage to Oracle Active Data Guard) and xCluster (for reporting or disaster recovery, similar in usage to Oracle GoldenGate).
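The trade-off between synchronous and asynchronous replication applies to both databases, and a small simulation makes it concrete. This is a deliberately simplified sketch with hypothetical names (`Replica`, `commit_sync`, `commit_async`, `ship`), not a model of either product's protocol: a synchronous commit returns only once the copy has the change, while an asynchronous commit returns immediately and ships the change later.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    log: list = field(default_factory=list)


def commit_sync(primary: Replica, standby: Replica, change: str) -> None:
    """Acknowledge only after the standby also has the change (RPO = 0)."""
    primary.log.append(change)
    standby.log.append(change)  # shipped before the commit returns


def commit_async(primary: Replica, pending: list, change: str) -> None:
    """Acknowledge at once; the change waits in a queue to be shipped later."""
    primary.log.append(change)
    pending.append(change)


def ship(pending: list, standby: Replica, count: int) -> None:
    """Background shipping: apply up to `count` queued changes, in order."""
    for _ in range(min(count, len(pending))):
        standby.log.append(pending.pop(0))


def lost_on_failover(primary: Replica, standby: Replica) -> list:
    """Changes acknowledged on the primary that the standby never received."""
    return primary.log[len(standby.log):]


if __name__ == "__main__":
    primary, standby, pending = Replica(), Replica(), []
    for change in ("t1", "t2", "t3"):
        commit_async(primary, pending, change)
    ship(pending, standby, 1)  # the replica lags behind by two changes
    print("lost if the primary fails now:", lost_on_failover(primary, standby))
```

The asynchronous path never slows down writes, which is why it suits read replicas and remote clusters, but whatever is still queued at failover time is the data loss (the RPO).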

When we compare Oracle and YugabyteDB, we need to compare every Oracle MAA level (each of which has unique availability options on top of the database) to the single YugabyteDB database, where replication, sharding, and clustering are intrinsic capabilities. The best and easiest way to do so is via the table below.

Database Comparisons

Comparing YugabyteDB and each Oracle MAA level:

| | 🚀 YugabyteDB (built-in features, all open source) | 🥉 Oracle MAA Bronze | 🥈 Oracle MAA Silver | 🥇 Oracle MAA Gold | 💰 Oracle MAA Platinum |
|---|---|---|---|---|---|
| Main Goal | Cloud-Native Resilience | Backup | Bronze + HA | Silver + DR | Gold + Multi-Master |
| HA/DR product | Native in YugabyteDB core | RMAN | RAC | RAC + Data Guard (ADG) | RAC + DG + GoldenGate |
| RPO (Recovery Point Objective) | 0 (no data loss thanks to sync replication to the quorum) | Minutes (archivelog backups) | Same as Bronze (no additional DB protection) | 0 (in Sync) | Seconds if no lag |
| RTO (Recovery Time Objective) | 3 seconds for Raft Leader re-election (+TCP timeout if they have to re-connect) | Hours to days depending on the size of database | Seconds for server crash, but no database protection | Minutes with FSFO (Sync) | A few seconds if no conflict (2-way) |
| Corrupt Block Detection | Automatic detection and repair during compaction | RMAN detection + manual blockrecover | No additional protection | Automatic with lost write detection | No additional protection |
| Online Upgrade | Zero downtime | Minutes to hours for major releases | Some minor upgrades are rolling (OS, patch) | Near zero downtime with transient logical | Yes, if 2-way replication |
| Human Error | Snapshots schedule (demo) | Flashback Database, Snapshot Standby, Log Miner, Flashback Query, Flashback Transaction, dbms_rolling, dbms_redefinition, ASM | | | |
| Online DDL | Work in progress (#4192) | No transactional DDL, but EBR (Edition Based Redefinition) | | | |
| Active-Active | Yes, distributed and ACID across the whole cluster. Additional async read replicas, and cross-cluster logical replication, are possible | No, the spare server is shut down (must be licensed if up >10 days a year) | Within interconnect and shared disk only (same data center), yes | No. The standby can be read with Active DG option, but not ACID and no writes | Yes, if 2-way replication without ACID guarantees and with manual or rule-based conflict resolution |
| Application Continuity | With HA proxy or Smart Driver, application connections may have to re-start some queries or re-connect immediately | CMAN (proxy) | Transparent failover and transaction guard | | |
| Complexity | None (built-in cluster replication) | Small | High (clusterware) | Medium (in addition to RAC) | High (as any logical replication) |
| Price (Software) | Free and open-source | Enterprise Edition (50K/CPU) | EE + 50% (RAC option) | EE + RAC + 25% (ADG option) | EE + RAC + ADG + 75% (100% but ADG is included) |

Want More Technical Information? Let’s Dive into Distributed SQL Replication Differences

YugabyteDB distributes data and transaction processing to the database nodes, which are all equal and store their own shards (tablets) and replicas (tablet peers). Filtering rows and columns and applying changes happen next to the storage, by each tablet server.
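Processing next to the storage can be pictured as follows. This is an illustrative sketch only: the tablet layout, the row format, and the function names are assumptions made for the example. Each tablet filters its own rows and returns a single partial count, so the SQL layer combines a few numbers instead of receiving every row.

```python
from typing import Callable, Dict, List

Row = Dict[str, object]


def count_on_tablet(rows: List[Row], predicate: Callable[[Row], bool]) -> int:
    """Runs where the tablet is stored: filter locally, return one number."""
    return sum(1 for row in rows if predicate(row))


def pushed_down_count(tablets: Dict[str, List[Row]],
                      predicate: Callable[[Row], bool]) -> int:
    """SQL-layer side: add up the partial count returned by each tablet."""
    return sum(count_on_tablet(rows, predicate) for rows in tablets.values())


if __name__ == "__main__":
    tablets = {
        "tablet-1": [{"id": 1, "status": "open"}, {"id": 2, "status": "closed"}],
        "tablet-2": [{"id": 3, "status": "open"}],
        "tablet-3": [{"id": 4, "status": "open"}, {"id": 5, "status": "closed"}],
    }
    print(pushed_down_count(tablets, lambda row: row["status"] == "open"))
```

In this toy data set, three integers cross the network rather than five rows, and the gap widens as tables grow.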

In contrast, Oracle RAC moves data (database blocks) to the processing nodes so they can work on it like a typical monolithic database. Here, the session’s process writes directly to the shared buffer pool. This is possible only on a low-latency network like the interconnect inside an Exadata database machine or in Oracle Cloud Infrastructure. It is not supported on other public cloud providers, nor can it scale out to multiple machines in a shared-nothing cloud. Extending the RAC cluster to multiple failure zones is possible with Oracle ASM, in a stretched cluster, but again, this is limited to a low-latency network.

All this happens in one physical database. It can be mirrored to another Availability Zone with Data Guard, but all data files are the same, bit for bit, and only one site is active. To go further, you would need to add GoldenGate. This active-active configuration is possible only with complex replication conflict handling or application sharding. As a result, all technologies must be combined to provide maximum availability.

The Oracle MAA combines great technology developed, or bought, by Oracle through the last 30 years of commercial RDBMS growth. With the rise of web applications and cloud infrastructure, shared-nothing databases (NoSQL and NewSQL) have become the standard for scaling out. In distributed SQL, as in Google Spanner, replication and sharding are the foundation of the database rather than a set of additional options added on top of an SQL database.

YugabyteDB simplifies this setup by separating the stateless SQL layer (the PostgreSQL backend, which is also the client to DocDB) from the distributed storage layer, and by:

- Automatically sharding tables and indexes to tablets

- Distributing operations (writes, locks, secondary index maintenance, reads, expressions, and aggregate pushdowns) as key-value pairs to the tablet peers in DocDB.
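Automatic sharding, as described in the list above, can be sketched as mapping each key to a position in a fixed hash space and splitting that space into contiguous ranges, one per tablet. The 16-bit hash space matches the range YugabyteDB uses for hash sharding, but the CRC32-based `hash_code` below is only a stand-in for the real hash function, and all function names are hypothetical.

```python
import bisect
import zlib

HASH_SPACE = 0x10000  # 16-bit hash space: 0x0000 to 0xFFFF


def hash_code(key: str) -> int:
    """Stand-in hash (CRC32 truncated to 16 bits), not the real function."""
    return zlib.crc32(key.encode("utf-8")) & 0xFFFF


def tablet_ranges(num_tablets: int) -> list:
    """Split the hash space into contiguous [start, end) ranges."""
    if num_tablets < 1:
        raise ValueError("at least one tablet is required")
    bounds = [i * HASH_SPACE // num_tablets for i in range(num_tablets + 1)]
    return list(zip(bounds[:-1], bounds[1:]))


def tablet_for(key: str, num_tablets: int) -> int:
    """Index of the tablet whose hash range contains the key's hash."""
    starts = [start for start, _ in tablet_ranges(num_tablets)]
    return bisect.bisect_right(starts, hash_code(key)) - 1


if __name__ == "__main__":
    for key in ("order-1", "order-2", "customer-42"):
        print(key, hex(hash_code(key)), "-> tablet", tablet_for(key, 3))
```

The application never sees this mapping: it issues ordinary SQL, and the SQL layer routes each key-value operation to the tablet that owns the hash range.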

High availability and high performance in YugabyteDB are not additional components, as in traditional databases. Instead, they come from a simple replication factor setting, with optional placement information declared at the database or tablespace level that maps to cloud providers, regions, and zones. This is why the comparison table above has only one column for YugabyteDB and four for Oracle MAA.
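As a rough sketch of what such a placement declaration can look like at the tablespace level, the helper below builds a `CREATE TABLESPACE` statement with a `replica_placement` option. The cloud, region, zone, and tablespace names are hypothetical, the helper functions are not part of any YugabyteDB tooling, and the exact option syntax should be checked against the YugabyteDB documentation for your version.

```python
import json


def replica_placement(zones: list, num_replicas: int) -> str:
    """Build the placement JSON: at least one replica in each listed zone."""
    if len(zones) > num_replicas:
        raise ValueError("more zones than replicas to place in them")
    blocks = [
        {"cloud": cloud, "region": region, "zone": zone, "min_num_replicas": 1}
        for cloud, region, zone in zones
    ]
    return json.dumps({"num_replicas": num_replicas, "placement_blocks": blocks})


def create_tablespace_sql(name: str, zones: list, num_replicas: int) -> str:
    """Wrap the placement JSON in a CREATE TABLESPACE statement."""
    placement = replica_placement(zones, num_replicas)
    return f"CREATE TABLESPACE {name} WITH (replica_placement='{placement}');"


if __name__ == "__main__":
    # Hypothetical names: three zones of one region, replication factor 3.
    zones = [
        ("aws", "eu-west-1", "eu-west-1a"),
        ("aws", "eu-west-1", "eu-west-1b"),
        ("aws", "eu-west-1", "eu-west-1c"),
    ]
    print(create_tablespace_sql("three_zone_ts", zones, 3))
```

The point of the example is how little there is to declare: a replica count and where the replicas should live, with no separate clustering or standby product to install.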

Conclusion

YugabyteDB provides users with PostgreSQL compatibility together with the high availability features usually found only in commercial databases. These features are part of the core of YugabyteDB and are fully open source.

This article and comparison table should help you understand how Oracle MAA options map to YugabyteDB’s intrinsic features when considering migration projects.

For more insight into how YugabyteDB’s resilience ensures application continuity during cloud outages, check out these recent customer stories:

- How Plume Handled Billions of Operations Per Day Despite an AWS Zone Outage

- How a Fortune 500 Retailer Weathered a Regional Cloud Outage with Yugabyte DB

This comparison was written in July 2022 by Franck Pachot, Developer Advocate at Yugabyte. Franck has 20 years of experience consulting on Oracle Database (Oracle Certified Master, Oracle ACE Director) and is a co-author of “Oracle Database 12c Release 2 Multitenant” (Oracle Press). Features may change with future releases of both databases.