Distributed SQL Tips and Tricks – Jan 24, 2020

Welcome to this week’s tips and tricks blog, where we recap some distributed SQL questions from around the Internet. We’ll also review upcoming events, new documentation and blogs that have been published since the last post. Got questions? Make sure to ask them on our YugabyteDB Slack channel, Forum, GitHub or Stack Overflow. Ok, let’s dive right in:

What is the performance impact of deleting tombstones in YugabyteDB?

The impact of a large number of deletes/overwrites to a key is pretty minimal in YugabyteDB. The reasons are multifold:

First, the read operation in an LSM engine is done by performing a logical merge of memtables/SSTables that are sorted in descending timestamp order for each key. In effect, the read will see the latest value of the row first, and the overhead of deletes (which show up further down in the logical sort order) should not be observable at all.

Second, flushes and minor compactions only need to retain the latest deleted or overwritten value. All other overwrites can be garbage collected immediately. This activity doesn’t need to wait for a major compaction. This is unlike Apache Cassandra, which uses eventually consistent replication and therefore must retain tombstones for much longer to keep deleted values from resurfacing. Because YugabyteDB uses the Raft protocol for strongly consistent replication, no such special handling is needed for deletes.
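To make the two points above concrete, here is a minimal Python sketch of an LSM-style read and a minor compaction. It is a toy model under stated assumptions, not DocDB's actual code: the `Entry` type, the integer timestamps, and the `read` and `compact` helpers are all hypothetical names introduced for illustration.

```python
import heapq
from typing import Iterator, List, NamedTuple, Optional


class Entry(NamedTuple):
    key: str
    ts: int                # stand-in for a write timestamp; larger is newer
    value: Optional[str]   # None marks a delete (tombstone)


def sort_key(e: Entry):
    # Keys ascending, and for each key the newest timestamp first.
    return (e.key, -e.ts)


def merged(runs: List[List[Entry]]) -> Iterator[Entry]:
    # Logical merge of the memtable and SSTables (each already sorted by sort_key).
    return heapq.merge(*runs, key=sort_key)


def read(runs: List[List[Entry]], key: str) -> Optional[str]:
    # The first entry seen for a key is its newest version, so older
    # overwrites and deletes further down are never examined.
    for e in merged(runs):
        if e.key == key:
            return e.value  # None if the newest version is a tombstone
        if e.key > key:
            break
    return None


def compact(runs: List[List[Entry]]) -> List[Entry]:
    # Keep only the newest entry per key (value or tombstone); drop the rest.
    out: List[Entry] = []
    for e in merged(runs):
        if not out or out[-1].key != e.key:
            out.append(e)
    return out


memtable = [Entry("k1", 30, None), Entry("k2", 25, "b2")]
sstable_new = [Entry("k1", 20, "a2"), Entry("k3", 15, "c1")]
sstable_old = [Entry("k1", 10, "a1"), Entry("k2", 5, "b1")]
runs = [memtable, sstable_new, sstable_old]

print(read(runs, "k1"))  # newest version of k1 is a delete
print(read(runs, "k2"))
print(compact(runs))
```

In this toy model, the read for `k1` stops at the tombstone without touching the two older values, and the compaction output holds one entry per key. A real engine also has to account for details this sketch ignores, such as reads that are still in flight at older timestamps.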

How does YugabyteDB achieve high data density per node?

YugabyteDB stores its data in DocDB, a distributed document store built with inspiration from Google Spanner. DocDB’s per-node storage engine is a customized fork of RocksDB. This fact enables a number of optimizations related to data density, such as:

- Block-based splitting of bloom/index data: RocksDB’s index and bloom filters have been enhanced in YugabyteDB to be multi-level/block-oriented structures so that these metadata blocks can be demand-paged into the block cache much like data blocks. This enables YugabyteDB to support very large data sets in a RAM efficient and memory allocator friendly manner.

- Size-tiered compactions: YugabyteDB’s compactions are size tiered. This has the advantage of lower disk write (IO) amplification compared to leveled compactions. The space amplification concern usually associated with size-tiered compactions does not hold true in YugabyteDB because each table is broken into several shards, and the number of concurrent compactions across shards is throttled. As a result, the typical space amplification in YugabyteDB tends to be not more than 10-20%.

- Smart load balancing across multiple disks: DocDB supports a just-a-bunch-of-disks (JBOD) setup of multiple SSDs and doesn’t require a hardware or software RAID. The RocksDB instances for various tablets are balanced across the available SSDs uniformly, on a per-table basis.

- Efficient C++ implementation: There is no “stop-the-world” GC that needs to happen, which helps keep latencies low and consistent.

- On-disk block compression: This capability lowers read/write IO while an in-memory uncompressed block cache results in very low CPU overhead and latency.

- Compaction throttling & queues: Globally throttled compactions and small/big compaction queues help prevent compaction storms from overwhelming the server.

For more details check out the DocDB documentation and the blog, “Enhancing RocksDB for Speed & Scale”.
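As a rough illustration of the per-table balancing across disks mentioned in the list above, the following Python sketch places each tablet on the disk that currently holds the fewest tablets of the same table. This is only a sketch of the idea, not YugabyteDB's placement logic: the `assign_tablets` function, the tie-break on total disk load, and the mount paths are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def assign_tablets(
    tablets: List[Tuple[str, int]], disks: List[str]
) -> Dict[Tuple[str, int], str]:
    """Place (table, tablet_id) pairs on disks, balancing each table separately."""
    per_table: Dict[str, Dict[str, int]] = defaultdict(lambda: {d: 0 for d in disks})
    total = {d: 0 for d in disks}
    placement: Dict[Tuple[str, int], str] = {}
    for table, tablet_id in tablets:
        counts = per_table[table]
        # Prefer the disk with the fewest tablets of this table;
        # break ties using the overall number of tablets on the disk.
        disk = min(disks, key=lambda d: (counts[d], total[d]))
        counts[disk] += 1
        total[disk] += 1
        placement[(table, tablet_id)] = disk
    return placement


disks = ["/mnt/d0", "/mnt/d1"]  # hypothetical JBOD mount points
tablets = [("orders", i) for i in range(4)] + [("users", i) for i in range(3)]
placement = assign_tablets(tablets, disks)

for (table, tablet_id), disk in placement.items():
    print(table, tablet_id, "->", disk)
```

Balancing per table rather than only by total count means a hot table's tablets end up spread over all the disks instead of clustering on one.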

Are there limitations when working with collection data types in the YCQL API?

As in Apache Cassandra, YugabyteDB uses collection data types to specify columns for data objects that can contain more than one value. Because YugabyteDB uses synchronous Raft replication, all DML operations are atomic and transactional, so YugabyteDB doesn’t suffer from inconsistencies when making updates on collection columns. Note, however, that collections are still recommended primarily for small datasets.

What is the maximum number of rows YugabyteDB can support?

The theoretical limit in YugabyteDB is likely to be 2^63 – 1, because queries like select count(*) return a signed int64. As a practical data point, in the experiment described in this post, 20 billion rows were successfully loaded into YugabyteDB.
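For a sense of scale, the arithmetic can be checked in a few lines of Python. This assumes the count is returned as a signed 64-bit integer, as bigint is in PostgreSQL. The variable names are just for illustration.

```python
INT64_MAX = 2**63 - 1    # largest value a signed 64-bit integer can hold
UINT64_MAX = 2**64 - 1   # largest unsigned 64-bit value, shown for comparison
loaded_rows = 20_000_000_000  # the 20 billion row experiment mentioned above

print(INT64_MAX)
print(UINT64_MAX)
# How many times larger the signed limit is than the 20 billion row load.
print(INT64_MAX // loaded_rows)
```

Either way, the counter's range is hundreds of millions of times larger than the 20 billion row load, so storage and cluster size will be the practical constraint long before the integer width is.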

New Documentation, Blogs, Tutorials, and Videos

New Blogs

- How to Migrate the Sakila Database from MongoDB to Distributed SQL with Studio 3T

- Four Data Sharding Strategies We Analyzed in Building a Distributed SQL Database

- An Introduction to PostgreSQL Table Functions in YugabyteDB – Part 1

- Implementing PostgreSQL User-Defined Table Functions in YugabyteDB – Part 2

- Four Compelling Use Cases for PostgreSQL Table Functions – Part 3

- Announcing the Kafka Connect YugabyteDB Sink Connector

New Videos

- Developing Cloud-Native Spring Microservices with a Distributed SQL Backend

- How to Upgrade a Local YugabyteDB Cluster

- YugabyteDB Operational Best Practices

- Getting Started with Local Installs, Sample Databases and Tools

- Getting Started with PostgreSQL’s Authentication and Authorization

- Getting Started with PostgreSQL’s Row-Level Security

- Designing a Change Data Capture and Two Data Center Architecture for a Distributed SQL Database

New and Updated Docs

- Raft options (for yb-master and yb-tserver)

- Helm chart

- Monitoring with Prometheus

- rpc-bind-addresses

- server-broadcast-addresses

- 6 new FAQs have been added to the collection

Upcoming Meetups and Conferences

PostgreSQL Meetups

- Feb 20: Silicon Valley PostgreSQL Meetup

Distributed SQL Webinars

- Watch now: Developing Microservices with the Google Hipster Demo, Istio and a Distributed SQL Database

Conferences

- Postgres Conference, March 23-27, 2020, New York

- KubeCon + CloudNativeCon Europe, March 30 – April 2, 2020, Amsterdam

- Google Cloud Next, April 6-8, 2020, San Francisco

- Red Hat Summit, April 27-29, 2020, San Francisco

We Are Hiring!

YugaByte is growing fast and we’d like you to help us keep the momentum going! Check out our currently open positions:

- Software Engineer – Cloud Infrastructure – Sunnyvale, CA

- Software Engineer – Core Database – Sunnyvale, CA

- Software Engineer – Full Stack – Sunnyvale, CA

- Developer Advocate – Sunnyvale, CA

Our team consists of domain experts from leading software companies such as Facebook, Oracle, Nutanix, Google and LinkedIn. We have come a long way in a short time but we cannot rest on our past accomplishments. We need your ideas and skills to make us better at every function that is necessary to create the next great software company. All while having tons of fun and blazing new trails!

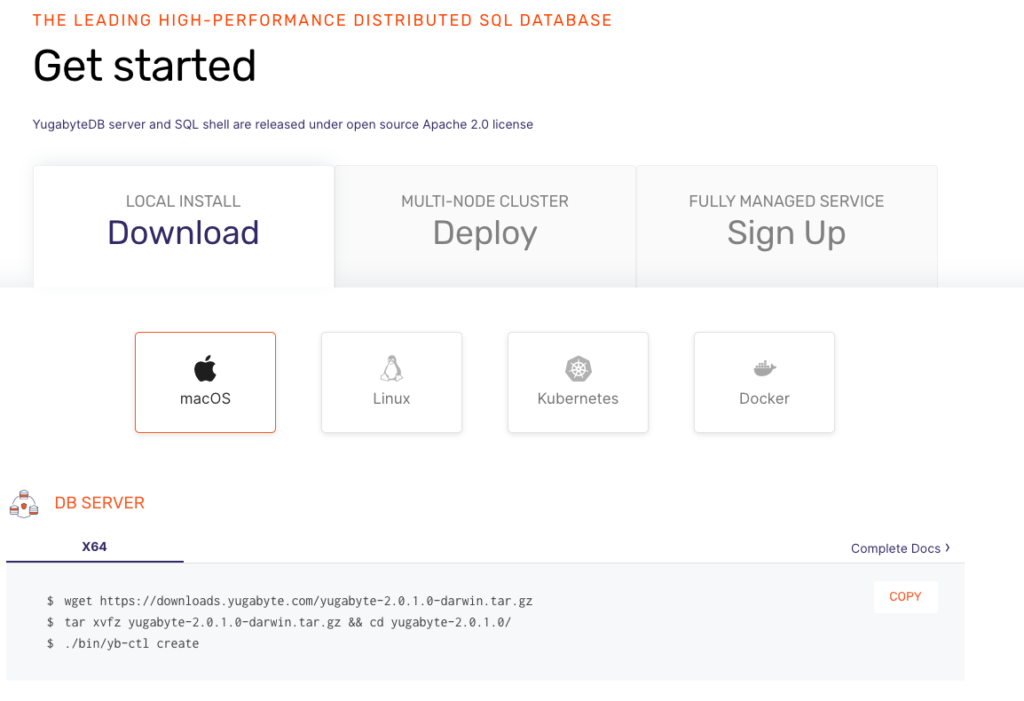

Get Started

Ready to start exploring YugabyteDB features? Getting up and running locally on your laptop is fast. Visit our quickstart page to get started.

What’s Next?

- Compare YugabyteDB in depth to databases like CockroachDB, Google Cloud Spanner and MongoDB.

- Get started with YugabyteDB on macOS, Linux, Docker and Kubernetes.

- Contact us to learn more about licensing, pricing or to schedule a technical overview.