Integrate YugabyteDB Logs into Splunk for Comprehensive Monitoring and Analysis

In the world of modern data-driven applications, monitoring and analyzing logs is vital to ensure system performance, identify issues, and gain valuable insights. Splunk, a leading platform for log management and analytics, offers powerful capabilities to aggregate, search, and analyze logs from various sources. In this blog, we’ll explore the benefits of exporting YugabyteDB logs to Splunk and provide a detailed step-by-step guide for doing just that.

Why export YugabyteDB logs to Splunk? YugabyteDB logs contain critical information about database operations, performance, and potential issues, and exporting them to Splunk enables comprehensive analysis and troubleshooting. Integrating YugabyteDB logs into Splunk also consolidates monitoring, because you can correlate them with logs from other systems in a unified view. Furthermore, Splunk's alerting capabilities enable real-time alerts based on YugabyteDB log events, ensuring timely notification of potential issues or anomalies.

Before delving into the details, let’s go through a quick overview of Splunk and YugabyteDB logs.

Splunk Cloud Overview

Splunk offers two deployment options: Splunk Enterprise and Splunk Cloud. Splunk Cloud is a fully managed Software-as-a-Service (SaaS) offering, while Splunk Enterprise is self-hosted, either on-premises or in the cloud. NOTE: This blog will focus on Splunk Cloud.

Splunk Cloud offers several methods to ingest logs and other data types. Common approaches include forwarders, cloud sources, and API integration through the Splunk HTTP Event Collector (HEC). Forwarders are lightweight agents installed on servers, virtual machines, or other devices to collect and send data to Splunk Cloud.

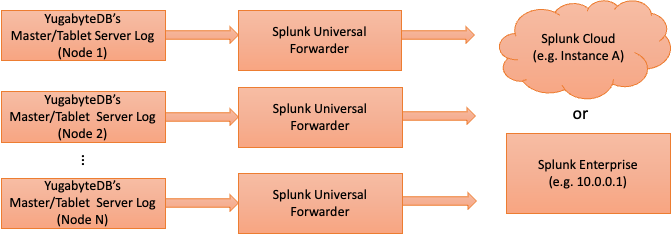

In this blog, we will learn how to export YugabyteDB logs to Splunk Cloud using Splunk Universal Forwarder. The method of exporting YugabyteDB logs is the same for other types of Splunk forwarders.

YugabyteDB Logs

In a YugabyteDB cluster, each node contains various log files that are valuable for monitoring, troubleshooting, and performance analysis. The specific log files and locations may vary based on the installation and configuration of YugabyteDB. Please refer to our Base Folder documentation for the location of YugabyteDB logs. Here are some commonly generated log files and their typical locations:

- yb-tserver logs*: These log files pertain to the Tablet Server component of YugabyteDB and contain information on tablet operations, replication, and Data Manipulation Language (DML) statements such as SELECT, INSERT, UPDATE, DELETE. The default location of these log files is `<yugabyte-data-directory>/tserver/logs/`.

- yb-master logs*: These log files relate to the Master Server component of YugabyteDB, and contain log information on cluster coordination, load balancing, metadata management, and Data Definition Language (DDL) such as CREATE TABLE, DROP TABLE, ALTER TABLE. The default location of these log files is `<yugabyte-data-directory>/master/logs/`.

- postgresql logs: These logs provide insights into the activities, errors, warnings, and events of the YSQL layer. They provide insights on the internal operations of the database, including startup, shutdown, connection events, YSQL statement execution, and error messages. The default location of these log files is `<yugabyte-data-directory>/tserver/logs/`. If you wish to configure Postgres audit logging using the pgAudit extension, the audit logs will be included in the Postgres logs. For more information on how to set up audit logging, please refer to this video.

*Note: yb-tserver and yb-master logs are organized by error severity: FATAL, ERROR, WARNING, INFO.
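Before configuring a forwarder, it can help to confirm which of these log directories actually exist on a given node. The following is a minimal sketch, not an official YugabyteDB tool: the helper name `yb_log_dirs` and the `YB_DATA_DIR` variable are hypothetical, and the fallback path is only an example, so substitute your own data directory.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the YugabyteDB log directories present on this node.
# A node may run a tserver, a master, or both, so either directory can be absent.
yb_log_dirs() {
  data_dir="$1"   # the <yugabyte-data-directory> for this node
  for component in tserver master; do
    dir="$data_dir/$component/logs"
    if [ -d "$dir" ]; then
      echo "$dir"
    fi
  done
}

# YB_DATA_DIR is an assumed variable; the fallback is an example path only.
yb_log_dirs "${YB_DATA_DIR:-/home/centos/disk-1/yb-data}"
```

Each printed directory is a candidate for a Splunk monitor input on that node.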

How to Export Logs to Splunk Cloud from YugabyteDB

Now let's walk through exporting logs to Splunk Cloud from a multi-node YugabyteDB cluster. In a YugabyteDB cluster, each node will have yb-tserver logs, yb-master logs, or both, depending on its configuration.

- Install Splunk Universal Forwarder using the steps in the Splunk Forwarder Manual as a guide.

- Install and configure the Splunk Cloud universal forwarder credentials package.

- Configure Splunk index for each node in the YugabyteDB cluster as follows:

`$SPLUNK_HOME/bin/splunk add index <indexname>`

where $SPLUNK_HOME refers to the Forwarder’s installation directory.

In Splunk, an index is a repository for storing and retrieving data. It is a logical entity that represents a collection of data, such as log files, events, or other types of machine-generated data. When data is ingested into Splunk, it is assigned to a specific index based on the configuration. Creating an index allows users to efficiently manage, search, analyze, and control access to data within Splunk based on their specific requirements and use cases.

Note: Select the index name that best suits your environment.

- Configure a Splunk monitor for YugabyteDB log collection on each node as follows, replacing the example paths with the log directories on your own nodes:

`$SPLUNK_HOME/bin/splunk add monitor /home/centos/disk-1/yb-data/tserver/logs/`

`$SPLUNK_HOME/bin/splunk add monitor /home/centos/disk-1/yb-data/master/logs/`

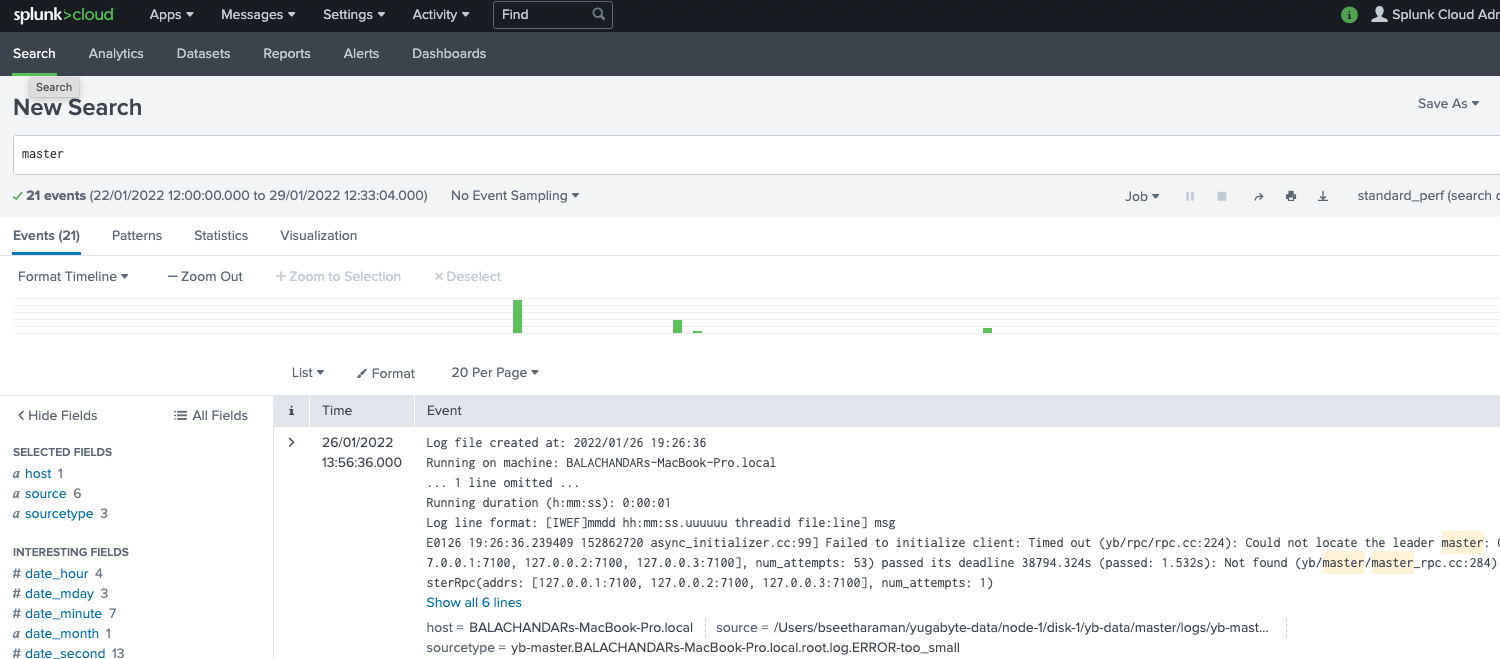
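The monitor commands above do not name a target index. One way to route the monitored logs to the index created earlier is the `-index` parameter of `splunk add monitor`. The sketch below is a dry run that only prints the commands for review. The function name `monitor_commands`, the index name `yugabytedb_logs`, and the `/opt/splunkforwarder` fallback for `$SPLUNK_HOME` are assumptions for illustration, not values from this guide.

```shell
#!/usr/bin/env bash
# Dry-run sketch: print one `splunk add monitor` command per YugabyteDB log
# directory, routing events to a named index with the -index parameter.
# Paths and the index name are placeholders; adjust them for your cluster.
monitor_commands() {
  data_dir="$1"     # YugabyteDB data directory on this node
  index_name="$2"   # index chosen in the previous step
  splunk_home="${SPLUNK_HOME:-/opt/splunkforwarder}"   # assumed install path
  for component in tserver master; do
    printf '%s/bin/splunk add monitor %s/%s/logs/ -index %s\n' \
      "$splunk_home" "$data_dir" "$component" "$index_name"
  done
}

# Review the printed commands, then run them on each node.
monitor_commands /home/centos/disk-1/yb-data yugabytedb_logs
```

Printing the commands first makes it easy to check the paths on each node before changing the forwarder's configuration.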

- Validate that logs from the YugabyteDB nodes are arriving in Splunk Cloud by going to "Search" and searching for "master" or "tserver." YugabyteDB logs will show up. Note: It may take a few minutes for Splunk Cloud to index and display the logs.

YugabyteDB logs on Splunk Cloud dashboard
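If the logs do not appear in Splunk Cloud, a complementary check on the forwarder side may help narrow down the problem. The sketch below wraps two Splunk CLI commands, `list forward-server` and `list monitor`, in a hypothetical helper named `check_forwarder`. It assumes the Universal Forwarder is installed on the node, and the CLI may prompt for the forwarder's credentials.

```shell
#!/usr/bin/env bash
# Hypothetical helper: show where the forwarder sends data and what it monitors.
# Pass the path to the splunk binary, for example "$SPLUNK_HOME/bin/splunk".
check_forwarder() {
  splunk_bin="$1"
  echo "== Forward servers =="
  "$splunk_bin" list forward-server || return 1
  echo "== Monitored inputs =="
  "$splunk_bin" list monitor || return 1
}

# Example, to be run on a YugabyteDB node:
# check_forwarder "$SPLUNK_HOME/bin/splunk"
```

The YugabyteDB log directories should appear among the monitored inputs, and the forward-server listing should show your Splunk Cloud endpoint.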

Conclusion

Timely log analysis is crucial for troubleshooting database performance issues. YugabyteDB offers seamless integration with Splunk, providing a convenient way to incorporate security monitoring, intrusion detection, forensics, and incident response, as well as troubleshooting and performance optimization, into one unified view. By exporting YugabyteDB logs to Splunk, organizations can take advantage of the robust log management and analytics functionalities offered by Splunk.