Distributed SQL Tips and Tricks – Jan 31, 2020

Welcome to this week’s tips and tricks blog where we recap some distributed SQL questions from around the Internet. We’ll also review upcoming events, new documentation, and blogs that have been published since the last post. Got questions? Make sure to ask them on our YugabyteDB Slack channel, Forum, GitHub, or Stackoverflow. Ok, let’s dive right in:

Can I specify the number of tablets I want a table to have?

Yes, this can be done by using the SPLIT INTO clause in the CREATE TABLE statement. This clause specifies the number of tablets that will be created for a given table.

For example:

CREATE TABLE foo(a INT PRIMARY KEY, b text) SPLIT INTO 60 TABLETS;

Wait. What’s a tablet?

A tablet is just another name for a shard. In a YugabyteDB cluster, tables are split into tablets so that the data can be distributed onto various nodes. This “splitting of tables” increases the availability and accessibility of the data.

The SPLIT INTO capability is useful if you know ahead of time how big or small your tables are. For example, most SQL databases end up having a large number of tables, of which most are fairly static and small in size. We can then use SPLIT INTO clause to create a small number of tablets (or even one) for such tables. SPLIT INTO is also useful in 2 Data Center (2DC) deployments since the bootstrapping of these setups require that both clusters have the exact same number of tablets.

Visit the Docs for more info about tablets and how data distribution works.

What data partitioning schemes does YugabyteDB support?

YugabyteDB supports both hash (default) and range partitioning. In YugabyteDB, there are two types of primary key columns:

Hash primary key columns: These columns have 1 or more leading hash partitioned columns. When a table is created in YugabyteDB, the first column is treated as the hash partition column by default. This behavior can, however, be modified by explicit use of the HASH annotation.

For example:

CREATE TABLE orders(customer_id INT, product_id INT, date TIMESTAMP, PRIMARY KEY((customer_id, product_id) HASH));

Range primary key columns: A table can have zero or more range primary key columns and it controls the top-level ordering of rows within a table (if there are no hash partition columns) or the ordering of rows among rows that share a common set of hash partitioned column values. By default, the range primary key columns are stored in ascending order. But this behavior can be controlled by explicit use of ASC or DESC.

In working with application developers and DBAs, we’ve determined that the most popular YugabyteDB use cases requiring scalability often do not need to perform range lookups on the primary key. This is why we picked consistent hash sharding as the default. User identity (user ids do not need ordering), product catalog (product ids are not related to one another) and stock ticker data (one stock symbol is independent of all other stock symbol) are some common examples.

In use cases where range lookups are desired, YugabyteDB allows specifying range sharding. In cases when range queries become important after the data is already loaded into a hash sharded table, a range sharded secondary index can be created on that column. Once the secondary index is rebuilt, range queries would become efficient.

For a more detailed discussion on partitioning schemes, check out, “Four Data Sharding Strategies We Analyzed in Building a Distributed SQL Database.”

How can I release all the internal resources associated with a database session?

The DISCARD ALL command will release all the internal resources associated with a YSQL database session. This would include things like cached query plans, cached sequence-related states and the dropping of temp of tables created during the current session.

What row-level locking options does YugabyteDB support in a SELECT statement?

Row-level locks do not affect data querying if you stick with YugabyteDB’s default snapshot isolation mode; they block only writers and lockers to the same row. YugabyteDB supports the following row-level locking on SELECT statements:

- FOR UPDATE & FOR NO KEY UPDATE both cause the rows retrieved by the SELECT statement to be locked as though for an update. This prevents them from being locked, modified or deleted by other transactions until the current transaction ends. We are in the process of refining the semantics of FOR NO KEY UPDATE. This will behave similar to FOR UPDATE, except that the lock acquired will be weaker: this lock will not block SELECT FOR KEY SHARE commands that attempt to acquire a lock on the same rows.

- FOR SHARE & FOR KEY SHARE both behave similarly to FOR NO KEY UPDATE, except that they acquire a shared lock rather than exclusive lock on each retrieved row. A shared lock blocks other transactions from performing UPDATE, DELETE, SELECT FOR UPDATE or SELECT FOR NO KEY UPDATE on these rows, but it does not prevent them from performing SELECT FOR SHARE or SELECT FOR KEY SHARE. We are also in the process of refining the semantics of FOR KEY SHARE. This will behave similar to FOR SHARE, except that the lock will be weaker: SELECT FOR UPDATE will be blocked, but not SELECT FOR NO KEY UPDATE. A key-shared lock will block other transactions from performing DELETE or any UPDATE that changes the key values, but not other UPDATE, and neither will it prevent SELECT FOR NO KEY UPDATE, SELECT FOR SHARE, or SELECT FOR KEY SHARE. You can track the progress on the enhancements to FOR NO KEY UPDATE and FOR KEY SHARE with this GitHub issue.

New Documentation, Blogs, Tutorials, and Videos

New Blogs

- YugabyteDB Engineering Update – Jan 29, 2020

- Distributed SQL vs. NewSQL

- How to Migrate the Sakila Database from MongoDB to Distributed SQL with Studio 3T

- Four Data Sharding Strategies We Analyzed in Building a Distributed SQL Database

- Four Compelling Use Cases for PostgreSQL Table Functions

New Videos

- Developing Cloud-Native Spring Microservices with a Distributed SQL Backend

- How to Upgrade a Local YugabyteDB Cluster

- YugabyteDB Operational Best Practices

- Getting Started with Local Installs, Sample Databases and Tools

- Getting Started with PostgreSQL’s Authentication and Authorization

New and Updated Docs

- DbSchema

- FAQ: What is the difference between ysqlsh and psql?

- FAQ: Why is consistent hash sharding the default sharding strategy?

Upcoming Meetups and Conferences

PostgreSQL Meetups

- Feb 20: Silicon Valley PostgreSQL Meetup

Distributed SQL Webinars

Conferences

- Postgres Conference, March 23-27, 2020, New York

- KubeCon + CloudNativeCon Europe, March 30 – April 2, 2020, Amsterdam

- Google Cloud Next, April 6-8, 2020, San Francisco

- Red Hat Summit, April 27-29, 2020, San Francisco

We Are Hiring!

YugaByte is growing fast and we’d like you to help us keep the momentum going! Check out our currently open positions:

- Frontend Engineer – Sunnyvale, CA

- Software Engineer – Cloud Infrastructure – Sunnyvale, CA

- Software Engineer – Core Database – Sunnyvale, CA

- Software Engineer – Full Stack – Sunnyvale, CA

- Developer Advocate – Sunnyvale, CA

Our team consists of domain experts from leading software companies such as Facebook, Oracle, Nutanix, Google and LinkedIn. We have come a long way in a short time but we cannot rest on our past accomplishments. We need your ideas and skills to make us better at every function that is necessary to create the next great software company. All while having tons of fun and blazing new trails!

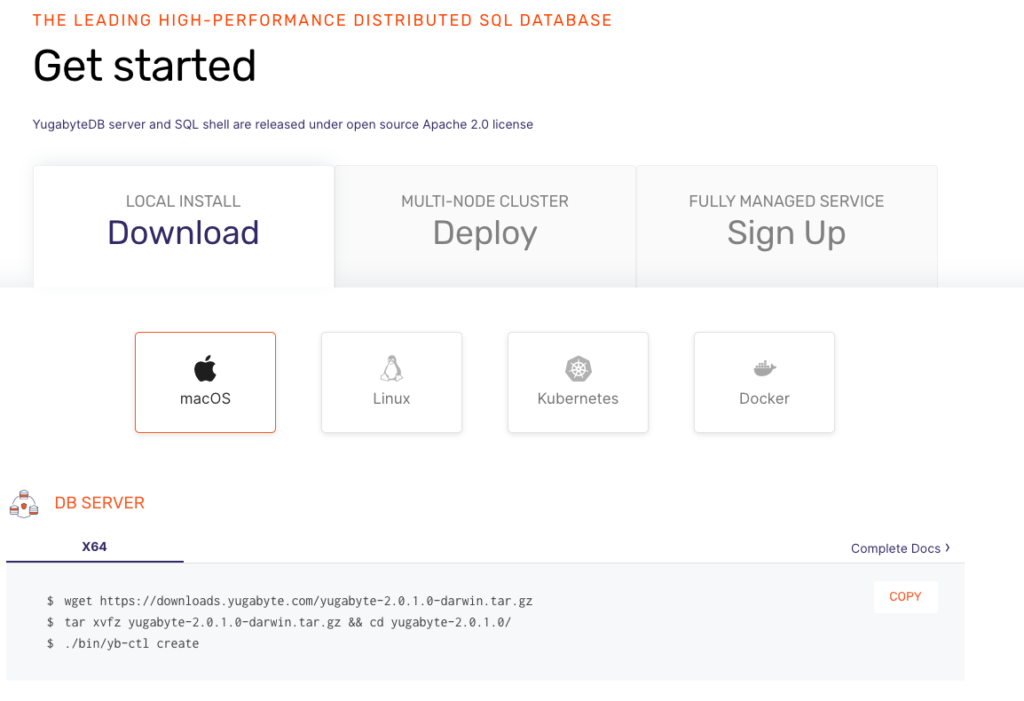

Get Started

Ready to start exploring YugabyteDB features? Getting up and running locally on your laptop is fast. Visit our quickstart page to get started.

What’s Next?

- Compare YugabyteDB in depth to databases like CockroachDB, Google Cloud Spanner and MongoDB.

- Get started with YugabyteDB on macOS, Linux, Docker and Kubernetes.

- Contact us to learn more about licensing, pricing or to schedule a technical overview.